A Perspective on Particle Physics

Philip Yock

The chance is high that the truth lies in the fashionable direction. But, on the off chance that it is in another direction – a direction obvious from an unfashionable view of field theory – who will find it? Richard Feynman, Nobel Lecture, 11 December 1965.

Introduction

The fundamental nature of matter has intrigued the minds of philosophers and scientists for centuries, but the greatest advances in our understanding occurred in a short burst about 100 years ago. A golden period extending from the end of the 19th century through the first third of the 20th century saw the major discoveries on which much of today’s science is based. The constituents of atoms (electrons and nuclei) were found, and also the laws governing their interactions (quantum mechanics and special relativity). By implication, the properties of molecules and larger structures could be explained, at least in principle. No exceptions were subsequently found to this legacy from the golden era.

The reductionist path that followed was aimed at uncovering the properties of the particles comprising the atomic nucleus, the proton and the neutron. This led to the unexpected discovery of a whole new world of particles of which the proton and the neutron were merely two rather inconspicuous members. Using the observed properties of the new particles as a starting point, a theory of matter was formulated in the 1960s and 1970s which is now known as the “Standard Model”. This is consistent with many experimental results, but it includes several adjustable parameters, some surprising assumptions and also some conflicts with observation.

This essay attempts to review the above situation. We commence with a brief summary of the main developments that led to the Standard Model. This includes the pioneering discoveries by Rutherford and his contemporaries, and follows with contributions by Yukawa, Anderson, Dirac, Rabi, Feynman, Schwinger, Gell-Mann, Higgs and others. Not all of the major contributions are included – an impossible task in a brief essay. Most of the included material appeared in courses delivered by the author at the University of Auckland over a number of years.

Two alternative approaches to particle physics, due primarily to Schwinger and the author, are then presented. It is shown how the developments of the alternative approaches dovetail with those of the Standard Model. Their strengths and weaknesses in comparison to the Standard Model are discussed. Experimental tests specifically designed for them are described. Finally, a new approach for testing all theories that could perhaps become feasible in the future is noted.

We begin with a historical review of the main observations that led to the Standard Model.

The atomic nucleus

We commence this essay in the 20th century with the 1897 discovery of the electron by J. J. Thomson behind us. This enables us to start with the exploits of Thomson’s best-known student, Ernest Rutherford, who, as every New Zealander knows, discovered the atomic nucleus.

Rutherford fired alpha particles from radioactive sources at various targets in the course of his many table-top experiments and found, to his surprise, that the alpha particles were often deflected through large angles. With a gold target some were scattered backwards through angles greater than 90°. On the basis of the then-prevailing model of the atom, which was due to Thomson, Rutherford likened the back-scattered alpha particles to 15-inch shells being reflected by tissue paper. Something was amiss with his teacher’s theory! Rutherford correctly surmised that the atom contained a heavy nucleus at its centre.1

Rutherford and his colleagues found not only the atomic nucleus but also the constituents of which it is composed - protons and neutrons. They also made the first, admittedly approximate, measurements of the sizes of nuclear particles. They found that protons and neutrons are about the same in size, approximately 100,000 times smaller than an atom, and that the nucleus may be approximated as a system of closely packed spheres, as shown in Figure 1.

A UK contemporary of Rutherford, Francis Aston, and other physicists of the day, developed a method for weighing atomic nuclei, and found they were slightly less massive than the sum of the masses of their constituent particles.3 The mass difference was ascribed to the binding energy of the nucleus, and found to be millions of times greater than the binding energies of electrons in atoms. Thus was born today’s atomic model of matter and the nuclear industry.
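The mass-difference argument can be illustrated with a short calculation. The sketch below takes helium-4 as an example nucleus. The rounded rest energies (in MeV) and the hydrogen ionization energy are values supplied here for illustration, not figures from the essay, and the function name is hypothetical.

```python
# Rounded rest energies in MeV (illustrative values, not from the essay).
PROTON_MEV = 938.272
NEUTRON_MEV = 939.565
HELIUM4_NUCLEUS_MEV = 3727.379
HYDROGEN_IONIZATION_EV = 13.6  # binding energy of the electron in hydrogen


def binding_energy_mev(protons, neutrons, nucleus_mev):
    """Mass defect: summed constituent rest energies minus the nuclear rest energy."""
    return protons * PROTON_MEV + neutrons * NEUTRON_MEV - nucleus_mev


helium_binding = binding_energy_mev(2, 2, HELIUM4_NUCLEUS_MEV)
# Convert MeV to eV before comparing with the atomic binding energy.
ratio = helium_binding * 1e6 / HYDROGEN_IONIZATION_EV
print(f"He-4 binding energy: {helium_binding:.1f} MeV")
print(f"Ratio to the electron binding energy in hydrogen: {ratio:.2e}")
```

With these inputs the nuclear binding energy comes out at roughly 28 MeV, about two million times the electron binding energy in hydrogen, in line with the "millions of times" comparison in the text.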

The achievements of Rutherford and his contemporaries are truly outstanding. The results are indisputable, and the experiments were performed with budgets many orders of magnitude smaller than those of today’s particle physicists. The techniques they developed are still in use.

The nuclear force

During the 1930s Hideki Yukawa, a theoretical physicist at Kyoto, proposed a mechanism to account for Rutherford’s picture of the atomic nucleus. Yukawa considered the nature of the strong force that holds the neutrons and protons tightly together in the nucleus. He based his considerations on the relativistic version of quantum mechanics known as “quantum field theory”. In this theory, every force-field has a particle or “quantum” associated with it. The quantum of the electromagnetic field, for example, is the photon. Yukawa assumed the existence of a quantum of the nuclear force field that holds the atomic nucleus together.4

From the size of the nucleus, as measured by Rutherford and co-workers, Yukawa was able to predict the mass of the nuclear quantum to be approximately 200 times the mass of the electron.
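A rough version of this estimate can be sketched numerically. The code below assumes the usual textbook relation in which the range of a force equals the reduced Compton wavelength of its quantum, so that the rest energy is ħc divided by the range. The 2 fm range is an assumed illustrative value, not a figure from the essay, and the function name is hypothetical.

```python
HBAR_C_MEV_FM = 197.327  # reduced Planck constant times c, in MeV*fm
ELECTRON_MEV = 0.511     # electron rest energy in MeV


def quantum_rest_energy_mev(range_fm):
    """Rest energy of a force quantum whose range is its reduced Compton wavelength."""
    return HBAR_C_MEV_FM / range_fm


ASSUMED_RANGE_FM = 2.0  # assumed range of the nuclear force (illustrative)
rest_energy_mev = quantum_rest_energy_mev(ASSUMED_RANGE_FM)
mass_in_electron_masses = rest_energy_mev / ELECTRON_MEV
print(f"{rest_energy_mev:.0f} MeV, about {mass_in_electron_masses:.0f} electron masses")
```

The result is sensitive to the assumed range: halving the range doubles the estimated mass, so the estimate fixes only the order of magnitude.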

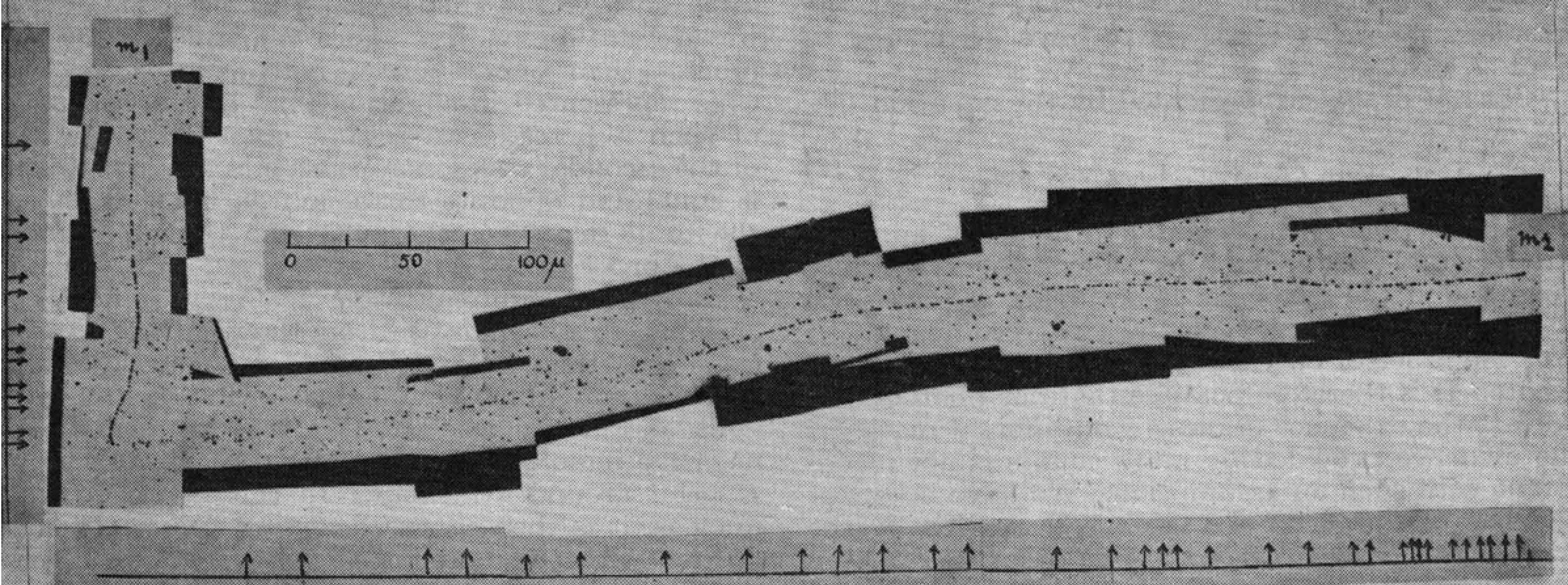

This prediction was brilliantly confirmed in 1947 when a particle with a mass 274 times that of the electron was found in the cosmic radiation by Cecil Powell and his associates at Bristol University.5 Powell’s particle was found to interact strongly with matter, as expected. In this way Rutherford’s results were accounted for. Yukawa’s theory was later generalized, and detailed tests were made successfully. His particle thus appeared to be the glue that holds the atomic nucleus together, yet today this view is challenged (see later).

Yukawa’s particle is known nowadays as the “pion” or π. It is unstable, having a lifetime of about 10⁻⁸ s. Pions can therefore only be detected by first creating them - by transmuting energy to mass via Einstein’s E = mc² relationship - and then observing them before they decay.

Cosmic radiation

From the 1930s to the 1950s the cosmic radiation was a useful source of high-energy particles for many experiments in particle physics. The pion was just one of many particles that were discovered. The cosmic radiation itself was discovered by an Austrian physicist, Victor Hess, in 1913. He carried out a series of high-altitude balloon flights in which he discovered a source of ionizing radiation that intensified with altitude (Figure 3).

For one hundred years, from 1913 to 2013, the source of the cosmic radiation remained a mystery. The remnants of supernovae (exploding stars) were popularly thought to be a likely possibility, and many searches were made, but none proved decisive until very recently.

One of the unsuccessful studies was made from 1987 to 1994 from New Zealand by a Japan/Australia/NZ collaboration (Figure 4). They monitored the supernova SN1987A that occurred in February 1987 in the Large Magellanic Cloud, just 170,000 light years away. SN1987A was the brightest supernova to be seen in the last 400 years, but the search for cosmic rays being emitted by it yielded a negative result.7 The results on SN1987A were however sensitive enough to rule out the hypothesis that young supernova remnants in general are the source of the cosmic radiation.

Very recently the mystery of the cosmic rays may have been cleared up. At the time of writing, results from a space telescope named Fermi provide strong evidence that older remnants of supernovae, some thousands of years old, are the source of the cosmic radiation.8

The muon, who ordered that?

In 1936, eleven years before Powell found the pion, Carl Anderson of California found another unstable, radioactive particle (lifetime about 10⁻⁶ s) in the cosmic radiation.9 Anderson’s particle was only slightly lighter than the pion, and at first it was thought it might be Yukawa’s predicted particle. However, it was found that Anderson’s particle did not interact strongly with matter, and that therefore it could not be the particle predicted by Yukawa. Anderson’s particle was later dubbed the “muon” or µ.

The muon was the first particle to be found in nature without any apparent need or purpose. The electron was clearly needed to account for electromagnetic, chemical and biological phenomena, the photon was needed to transmit electromagnetic forces, protons and neutrons were needed to form atomic nuclei, and the pion was needed to glue protons and neutrons together in atomic nuclei.

But in 1936, when Anderson discovered the muon, it seemed to be superfluous. The physicist I. I. Rabi famously asked “who ordered that?” Today, 77 years later, the question remains unanswered.

Antimatter

In the same type of experiment in which Anderson discovered the muon, he also discovered the anti-electron or “positron”.10 This is a positive particle with the same mass as the electron that annihilates on meeting an electron.

The positron had been predicted to exist by British theoretical physicist Paul Dirac11 in 1928 in a theory that, like Yukawa’s theory, amalgamated the theories of relativity and quantum mechanics, but in a different way from that employed by Yukawa. The predictions of Yukawa and Dirac, extraordinarily brilliant though they were, answered questions rather than raising them. It may yet turn out that the muon holds more telling information on the structure of matter than either the pion or the positron.

Strange particles

The muon turned out to be just the first of a veritable explosion of particles to be discovered from the 1940s onwards for which we know of no obvious need.

In the 1940s and 1950s a group of particles, known as K, Λ, Σ, Ξ and Ω⁻, was found in cosmic ray experiments, the first of which was carried out in the UK by Butler and Rochester.12 These particles were observed to share several characteristics - their lifetimes were of order 10⁻¹⁰ s, they interacted strongly with one another, and they were produced in association (e.g. a K with a Λ) but never alone.

Because the K, Λ, Σ, Ξ and Ω⁻ interacted strongly, they were dubbed “hadrons”. This distinguished them from other particles, termed “leptons”, which did not interact strongly. With this nomenclature, every particle is by definition either a “hadron” or a “lepton”. Protons, neutrons, pions, and the Λ, Σ, Ξ and Ω⁻ are hadrons; electrons and muons are leptons.

Although hadrons and leptons are distinguished by the strengths of their interactions, they share an important property. There are too many of both of them! Just as we do not know why the muon exists, so also we do not know why the K, Λ, Σ, Ξ and Ω⁻ exist. Collectively, the latter are referred to as the “strange” particles. We therefore have a second puzzle reminiscent of Rabi’s – “who needs strange particles?”

Following the discovery of the strange particles, further groups of hadrons were found. These are now known respectively as “charmed”, “bottom” and “top” particles. They have successively higher masses and shorter lifetimes than the strange hadrons, and they require successively higher energies to create them, but their properties are otherwise qualitatively similar to the strange hadrons. In addition, a third lepton was discovered, termed the “tau” or τ. All these particles appear to be as superfluous as the muon. In a series of lectures delivered at the University of Auckland in 1979 Feynman pondered the reason behind the existence of all these particles.13

Resonances

To complicate matters even further, other groups of hadrons termed “resonances” were discovered from the 1950s onwards by Enrico Fermi, Luis Alvarez and others. They have the shortest lifetimes of all, of order 10⁻²³ s, and they are clearly excited states of lighter hadrons. They are analogous to excited atoms. The latter exist because atoms have internal structures that can be excited. Likewise, the existence of excited hadrons, or resonances, suggests that hadrons have internal structures. They may be bound states of smaller objects.

In contrast, the three leptonic particles, the e, µ, and τ, appear to be point-like, fundamental particles. These particles are described by the equation of Dirac that he used to predict the existence of antimatter.

Naïve quark model

In 1964 Murray Gell-Mann of the California Institute of Technology attempted to put some order into the above-described “particle explosion” by assuming that the hadrons are bound states of a small set of fundamental building blocks that he termed “quarks”.14 A then-student at the same institute, George Zweig, came up with the same idea simultaneously, although Zweig referred to the building blocks as “aces”.15 Quarks (or aces) were assumed to be described by the above equation of Dirac that applies to electrons and muons.

The proposals of Gell-Mann and Zweig were regarded with some scepticism during the 1960s, largely because they assumed that the quarks or aces carried fractional electric charges, either -1/3 or +2/3 times the charge of the proton. At that time, there was no observational evidence for particles with fractional charges.

Gell-Mann closed his 1964 paper on quarks with the sentence “A search for stable quarks of charge -1/3 or +2/3 and/or stable di-quarks of charge -2/3 or +1/3 or +4/3 at the highest energy accelerators would help to reassure us of the non-existence of real quarks”.

In addition to the unprecedented assumption of fractional charges for quarks, Gell-Mann and Zweig did not invoke the presence of a force field to hold quarks together inside protons and other particles. They treated quarks as free particles. But if this were true, it would imply that protons and neutrons could be easily broken apart into their constituent quarks. The latter would be readily identifiable in experiments, as it is the electric charge of a particle that is universally used to detect its presence, and, according to the theory, quarks have very distinctive charges.

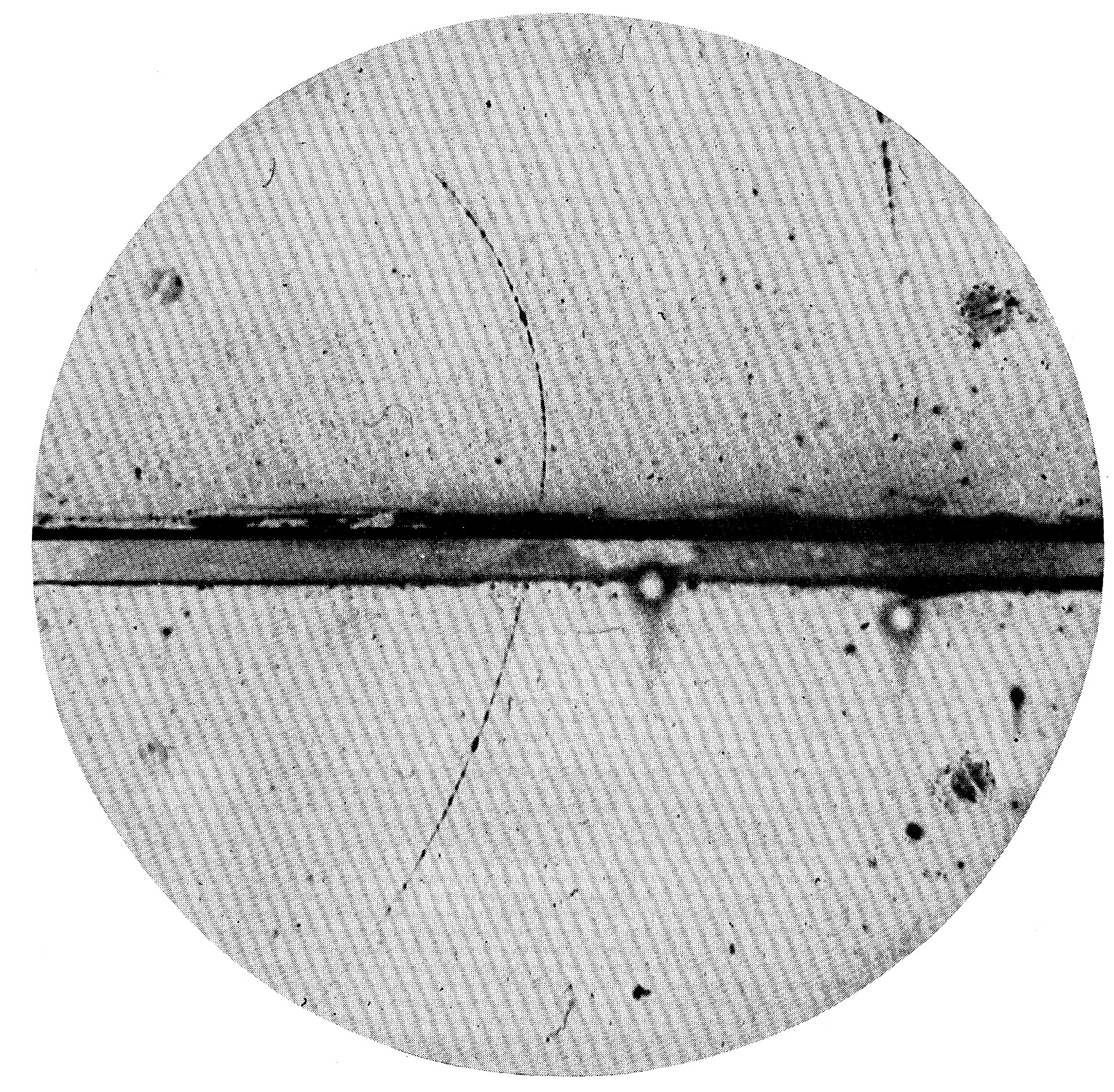

The tracks of the pion, the muon and the positron shown in Figures 2 and 5 above, for instance, are caused by the electric charges of these particles, and they are all integral. A particle with charge 1/3, for example, would leave an unmistakable track of 1/9th normal density. But such tracks have never been observed, despite diligent searches having been made, including in New Zealand.16,17
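The 1/9 figure follows if the ionization a particle leaves along its track scales as the square of its electric charge. A minimal sketch under that assumption, comparing particles at the same speed (the function name is hypothetical):

```python
from fractions import Fraction


def relative_track_density(charge):
    """Ionization density relative to a unit-charge particle at the same speed.

    Assumes ionization scales as the square of the charge (a simplification).
    """
    return Fraction(charge) ** 2


# Charges are in units of the proton charge.
for charge in (Fraction(1), Fraction(2, 3), Fraction(1, 3)):
    density = relative_track_density(charge)
    print(f"charge {charge}: relative track density {density}")
```

On the same assumption a particle of charge 2/3 would leave a track of 4/9 normal density, which would also stand out clearly from the tracks of integrally charged particles.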

Hence Gell-Mann’s enigmatic remark above: fractional charges are not seen, and therefore we can be assured of the non-existence of real quarks. Eventually, the Gell-Mann-Zweig theory of 1964 became known as the naïve quark model. It ushered in a dramatic change from previous thinking in particle physics.

Electron scattering

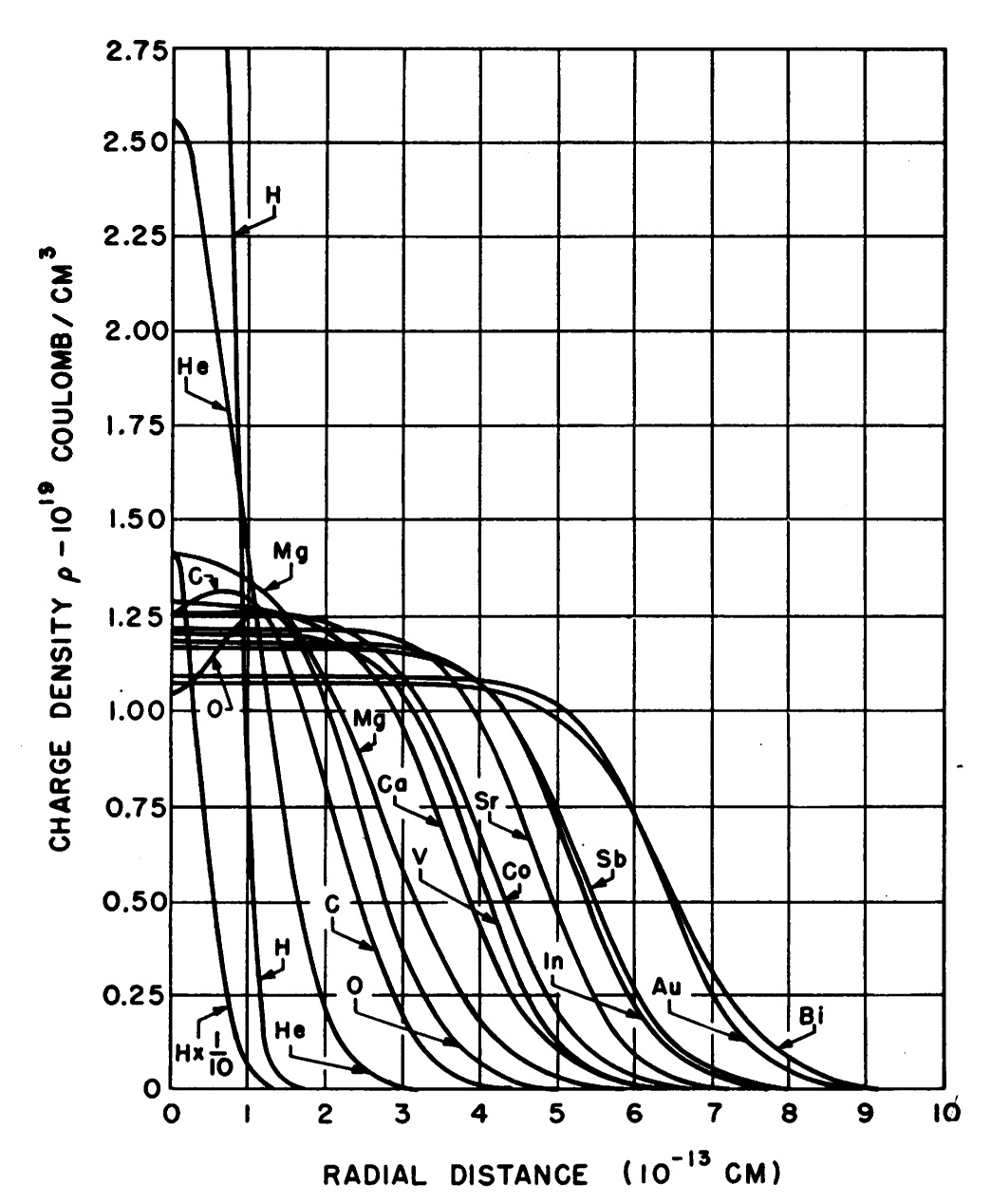

During the 1950s a series of beautiful experiments was carried out at Stanford University by Robert Hofstadter and his colleagues in which atomic nuclei were literally placed under the microscope.18 Hofstadter probed the structures of nuclei using a beam of moderately high-energy electrons (Figure 6). The technique was similar to that used by geologists and biologists in electron microscopy, and very high resolution was achieved, much greater than can be achieved with normal optical microscopy.

The analysis of Hofstadter’s data paralleled Rutherford’s analysis of alpha particle scattering data from 40 years previously. Indeed, Hofstadter expressed admiration for Rutherford, whose technique he was following. And, just as Rutherford’s results on atoms were indisputable, so also were Hofstadter’s results on their nuclei. Hofstadter was able to accurately and unambiguously measure the radii, and other characteristics, of atomic nuclei from the lightest to the heaviest, as shown in Figure 7.

SLAC

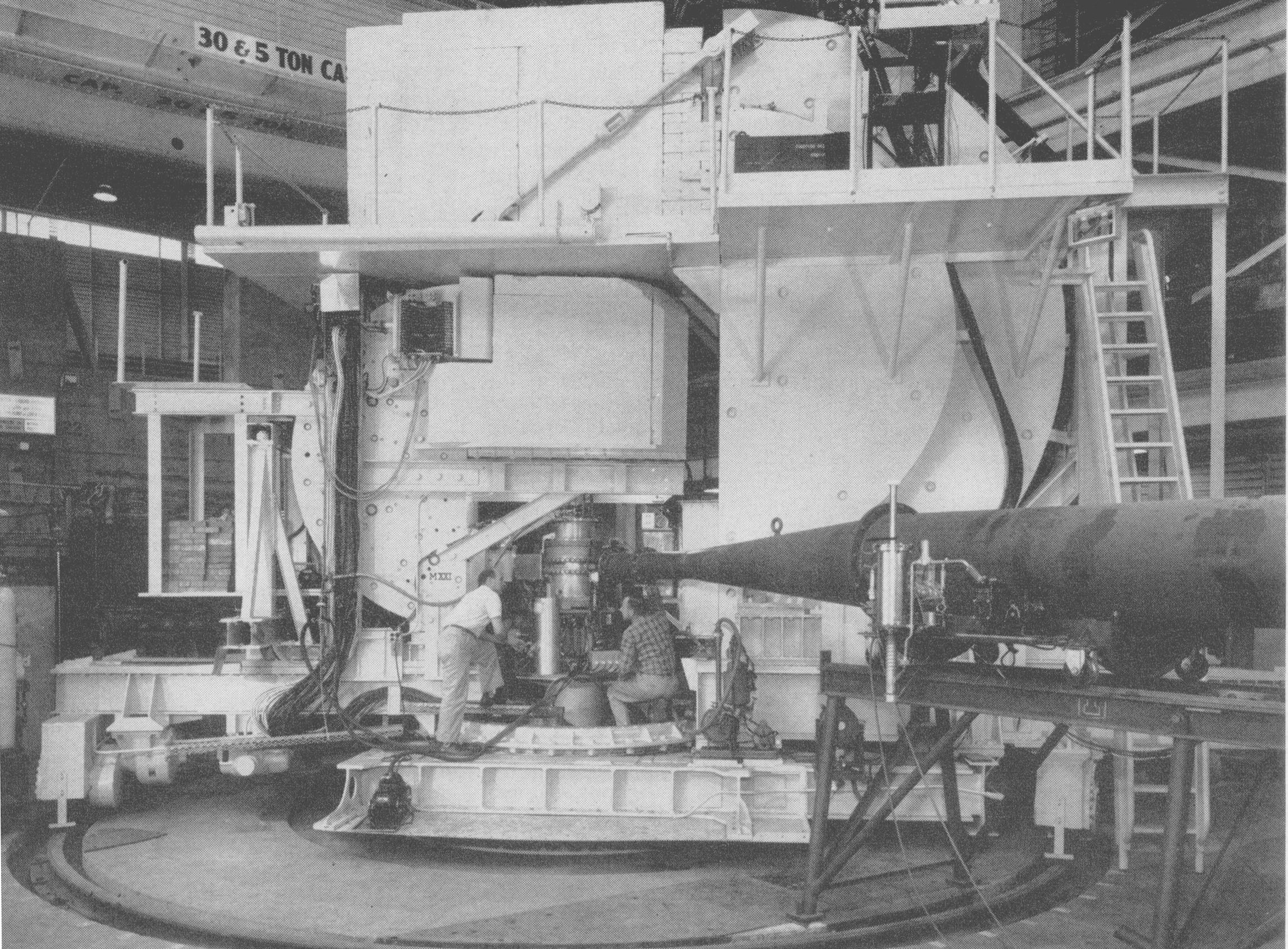

The best possible resolution that can be achieved in electron microscopy is predicted by de Broglie’s well-known relationship between the wavelength of a particle and its momentum, λ = h/p. The equation implies that the higher the momentum p of the electrons, the shorter their wavelength, and therefore the better the resolution. But high momentum corresponds to high energy. Hence, following Hofstadter, there was a case for increasing the energies of the electrons to improve the resolution.

To this end a two-mile (3 km) electron accelerator was built in the 1960s at Stanford, known as the Stanford Linear Accelerator or SLAC. It is shown in Figure 8. It accelerated electrons to energies of about 20 GeV, some 100 times higher than the energies employed by Hofstadter.
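The gain in resolution can be estimated directly from λ = h/p. The sketch below works in MeV and femtometres and obtains the momentum from the relativistic energy-momentum relation. The 200 MeV figure for the earlier experiments is inferred from the "100 times" comparison in the text, and the constants and function name are supplied here for illustration.

```python
import math

H_C_MEV_FM = 1239.842  # Planck constant times c, in MeV*fm
ELECTRON_MEV = 0.511   # electron rest energy in MeV


def de_broglie_wavelength_fm(total_energy_mev, rest_energy_mev=ELECTRON_MEV):
    """Wavelength h/p, with pc taken from E^2 = (pc)^2 + (mc^2)^2."""
    pc = math.sqrt(total_energy_mev**2 - rest_energy_mev**2)
    return H_C_MEV_FM / pc


slac = de_broglie_wavelength_fm(20_000.0)  # about 20 GeV, as quoted in the text
earlier = de_broglie_wavelength_fm(200.0)  # 100 times lower (inferred value)
print(f"200 MeV: {earlier:.2f} fm; 20 GeV: {slac:.3f} fm")
```

At these energies the electron rest energy is negligible, so the wavelength is very nearly inversely proportional to the energy. A hundredfold increase in energy shortens the wavelength from several femtometres, comparable to the size of a nucleus, to a small fraction of a femtometre, well below the size of a single proton.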

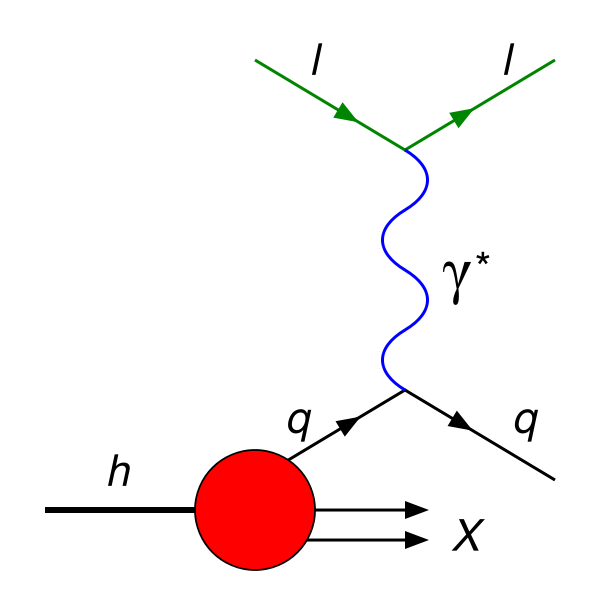

The analysis of the SLAC data that was popularly accepted at the time utilised Gell-Mann’s theory of free quarks described in the preceding section. According to the naïve quark model, a proton is composed of three fractionally charged particles, two with charges +2/3, and one with charge -1/3, to yield a net charge of +1. In the absence of forces holding the quarks together, the electron could scatter off any one of the three quarks as if it were a free particle, as shown in Figure 9.
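The charge bookkeeping of the naïve quark model is easy to check with exact fractions. The sketch below uses the quark labels u (charge +2/3) and d (charge -1/3) and also checks the neutron and the positive pion, taking the neutron as udd and the positive pion as a u quark with a d antiquark. The function name is hypothetical.

```python
from fractions import Fraction

# Charges in units of the proton charge, as assumed in the naive quark model.
QUARK_CHARGE = {"u": Fraction(2, 3), "d": Fraction(-1, 3)}


def hadron_charge(quarks, antiquarks=()):
    """Sum the quark charges; an antiquark carries the opposite charge of its quark."""
    return (sum(QUARK_CHARGE[q] for q in quarks)
            - sum(QUARK_CHARGE[q] for q in antiquarks))


proton = hadron_charge(["u", "u", "d"])
neutron = hadron_charge(["u", "d", "d"])
positive_pion = hadron_charge(["u"], antiquarks=["d"])
print(proton, neutron, positive_pion)
```

Every three-quark or quark-antiquark combination built this way has an integral charge, whereas a single quark or a di-quark does not.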

This takes us back to Rutherford’s theory of the alpha-particle experiment. In that theory, the nucleus was treated as a free particle, because it was freely able to recoil when struck by a photon. On the other hand, in the SLAC experiments, the interacting quark (signified by q in Figure 9) is not free. If it were, it would escape, as shown in the figure, and the di-quark denoted by X in the figure would carry on in the forward direction. In other words, one would expect to see single quarks and di-quarks, both with fractional charges, emerging from collisions, one forwards and one sideways.

This was the motivation behind Gell-Mann’s remark quoted above, but it is not supported by observation. This problem is addressed in the following and subsequent sections.

Coloured quarks

The fact that fractionally charged particles are not observed to emerge from collisions such as that depicted in Figure 9 guarantees that the treatment of quarks as free particles in the naïve quark model can, at best, be an approximation to reality.

In 1973 Gell-Mann and collaborators at Caltech and in Germany modified the quark hypothesis of 1964 by adding forces to the theory.20 They assumed that quarks are dually charged particles, carrying both fractional electric charges and also a second type of charge which, for lack of a better name, they called “colour” charge.

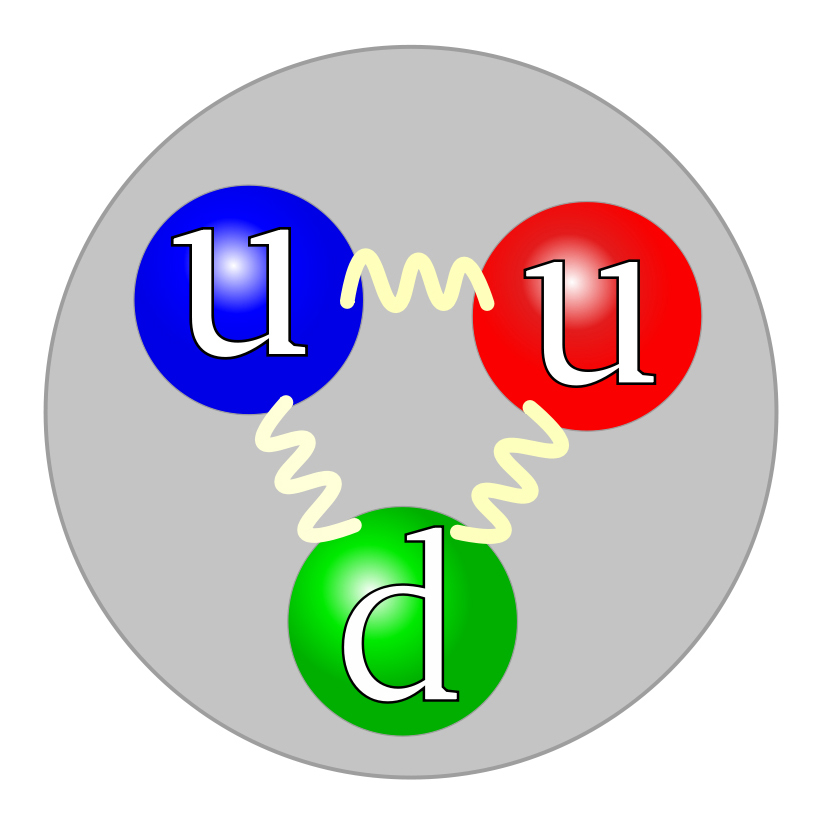

According to the 1973 theory, a proton, for example, is a bound state of three coloured quarks, one red, one blue and one green, two of which have electric charges +2/3, and one of which has an electric charge of -1/3, as shown in Figure 10.

It was assumed that colour charges are larger in magnitude than electric charges, and that forces between colour charges are correspondingly stronger than those between electric charges.

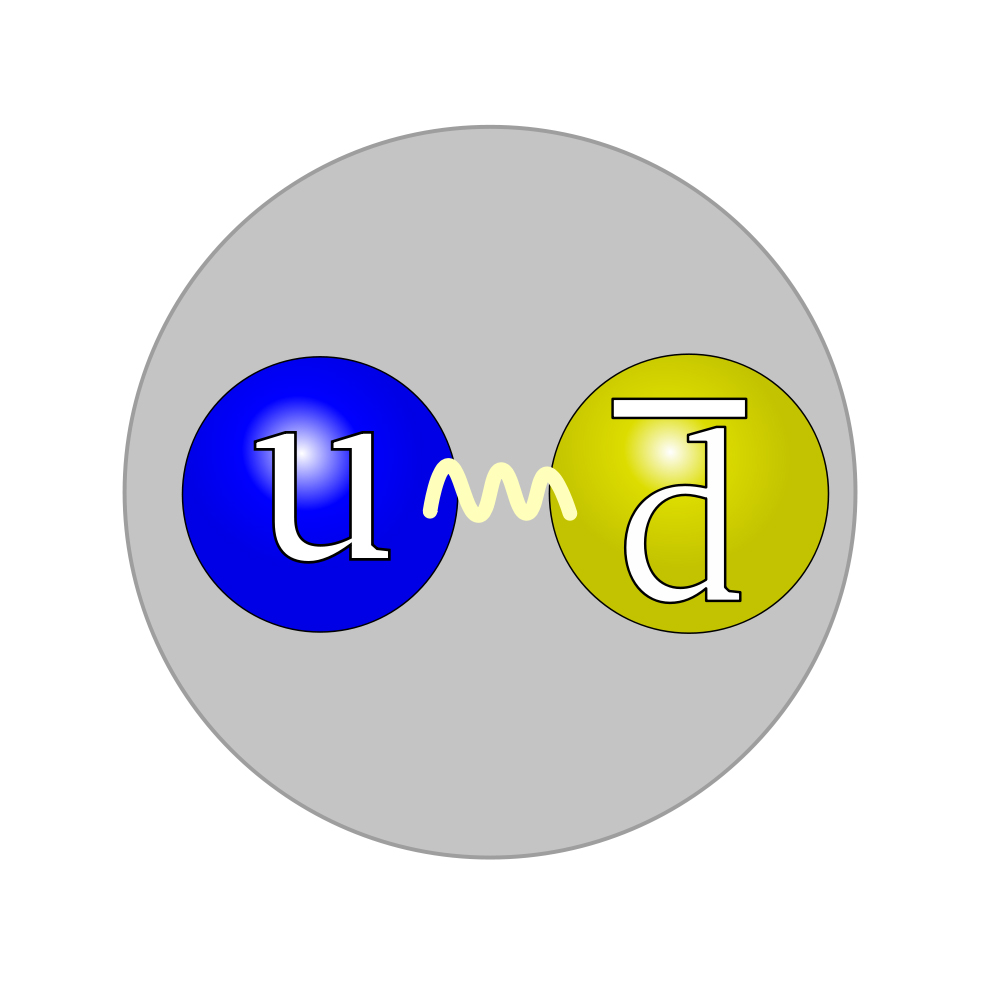

It was further assumed, by analogy to the electrical neutrality of atoms, that particles such as the proton are “colour neutral”. Two procedures for achieving colour neutrality were assumed - either three quarks of the primary colours as shown in Figure 10, or a quark-antiquark pair with opposite colours, as shown in Figure 11.

It was further assumed that the gluons are bi-coloured, carrying both a unit of colour charge and a unit of anti-colour charge. The charge of the gluon shown in Figure 11 could, for example, be blue-antigreen. If the blue u quark on the left emitted such a gluon, it would be converted to a green u quark. The gluon could then be absorbed by the yellow (i.e. antiblue) d antiquark on the right, thereby converting it to an antigreen (i.e. magenta) d antiquark.
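The colour bookkeeping in this exchange can be followed step by step. In the sketch below a gluon is written as a pair (colour, anticolour), and an antiquark is labelled by the colour it is the "anti" of. This representation and the function names are illustrative choices, not part of the theory as presented in the essay.

```python
# A gluon is (colour, anticolour): ("blue", "green") means blue-antigreen.
# An antiquark is labelled by the colour it is the "anti" of: "blue" means antiblue.


def quark_after_emission(quark_colour, gluon):
    """A quark emitting a (c, anti-c') gluon must have colour c and ends up with c'."""
    colour, anticolour = gluon
    if quark_colour != colour:
        raise ValueError("a quark cannot emit a gluon carrying a colour it lacks")
    return anticolour


def antiquark_after_absorption(anti_of, gluon):
    """An anti-c antiquark absorbing a (c, anti-c') gluon becomes anti-c'."""
    colour, anticolour = gluon
    if anti_of != colour:
        raise ValueError("the gluon colour must cancel the antiquark's anticolour")
    return anticolour


def is_colour_neutral_pair(quark_colour, anti_of):
    """A quark-antiquark pair is neutral when the colour and anticolour match."""
    return quark_colour == anti_of


gluon = ("blue", "green")                # blue-antigreen gluon
u_colour, dbar_anti_of = "blue", "blue"  # blue u quark, antiblue d antiquark
neutral_before = is_colour_neutral_pair(u_colour, dbar_anti_of)
u_colour = quark_after_emission(u_colour, gluon)
dbar_anti_of = antiquark_after_absorption(dbar_anti_of, gluon)
neutral_after = is_colour_neutral_pair(u_colour, dbar_anti_of)
print(u_colour, "anti" + dbar_anti_of, neutral_before, neutral_after)
```

The pair is colour neutral both before and after the exchange, which illustrates how gluon exchange can change the colours of the individual constituents while leaving the hadron as a whole colourless.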

The quarks comprising a positive pion (or any hadron) were assumed to be exchanging gluons all the time, thereby causing them to bind together. This is analogous to the constant exchanges of photons that occur between atomic electrons and the nucleus of an atom. These bind the electrons to the nucleus.

If now we reconsider the electron scattering process depicted in Figure 9 we may imagine final-state interactions occurring between the outgoing quark, which we arbitrarily assume here for sake of argument to be red, and the outgoing blue-green di-quark that reunite them into a single neutral, colourless object.

In principle, the above is possible, but, in practice, a different assumption for the final state is made, for ease of computation. In current state-of-the-art calculations it is assumed that the red quark converts (or “hadronizes”) to a spray or jet of normal integrally charged, colourless hadrons, and that the blue-green di-quark converts to another jet of normal hadrons.

However, as we learn at school, all known processes conserve electric charge, so a fractionally charged quark or di-quark cannot actually convert into integrally charged hadrons as described. Likewise, according to the theory, colour charge is conserved, so the conversion of a coloured object into colourless hadrons is doubly forbidden. This was the situation in 1973 when coloured quarks were first proposed, and it is the situation today, 40 years later.22 Feynman, one of the contributors to the theory, spoke of the theory as a “very tall house of cards”.23

The Standard Model

The major achievement of the coloured quark model of the 1970s was the impressive success it enjoyed in classifying the hundreds of then-known strange and charmed particles, including their resonances, as colourless three-quark or quark-antiquark combinations. This was analogous to Mendeleev’s classification of the chemical elements. In the 1980s and 1990s bottom and top particles were added, relatively seamlessly.

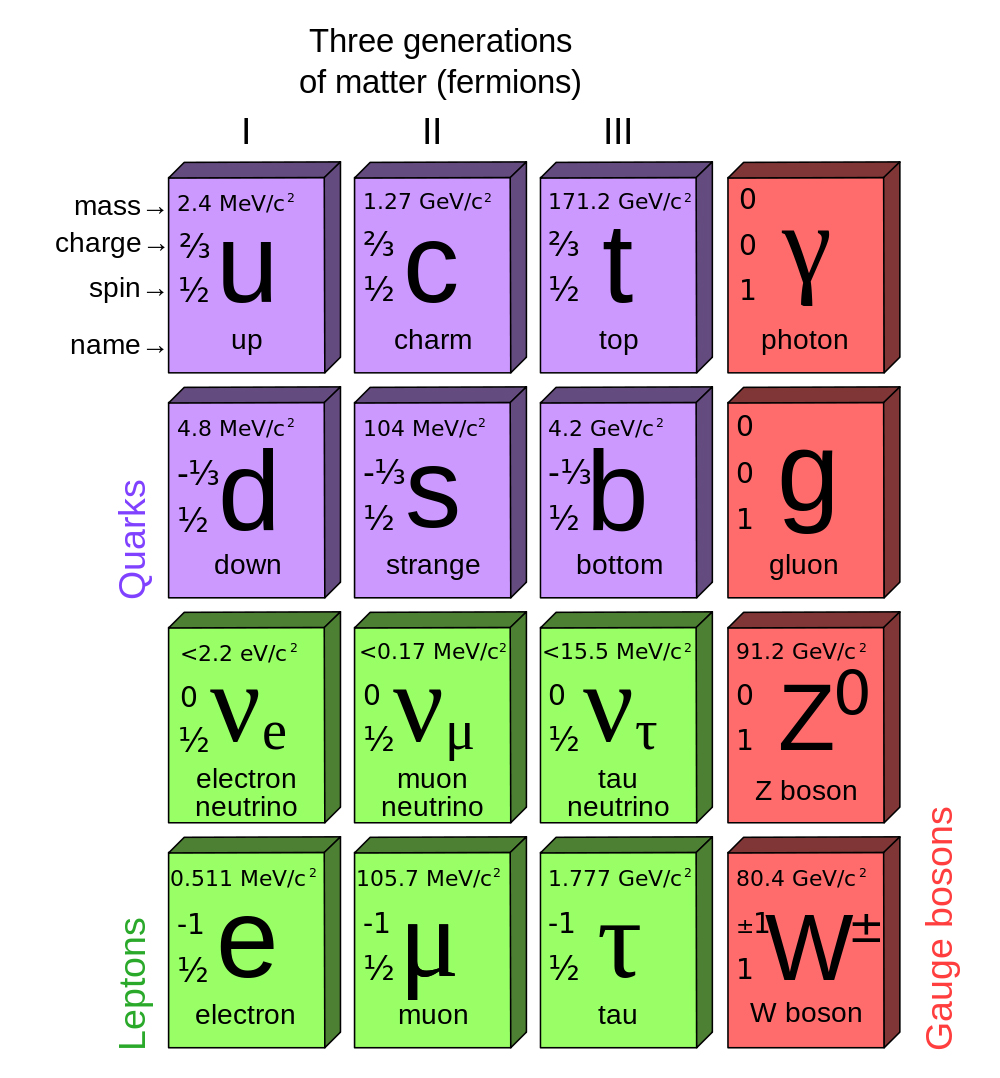

The current version of the model is shown schematically in Figure 12. This is known today as the “Standard Model” of physics. The three charged leptons, e, µ and τ, appear in the bottom row. Above them are three neutral partners named neutrinos that have not been discussed above. The quarks are shown in purple in the top two rows. Each one comes in three colours, red, blue or green, yielding a total of 18. The photon is shown at the upper right. The gluon, the quantum of the colour field, is shown beneath the photon. There are a total of eight bi-coloured (blue-antigreen etc.) gluons. The remaining particles, Z and W, are the quanta for interactions that give rise to beta-radioactivity. One further particle, the Higgs particle, is not shown in the table. It is discussed in the following section.

As mentioned above, the discoveries of strange particles, charmed particles, and top and bottom particles were all puzzling. These puzzles re-emerge in the Standard Model through the presence of the second and third columns or generations of particles shown in Figure 12. Their presence is surprising. Virtually all of nuclear physics, atomic physics, chemistry, biology and everyday science involves particles from only the first and fourth columns of Figure 12. Rabi’s question from 1936 is not answered by the Standard Model.

The suggestion is sometimes made that particles from the second and third generations could account for the dominance of matter over antimatter in the universe25,26, but this has never been confirmed.

The equations that describe the interactions between the various particles are simple in principle, but complicated in practice. It is frequently claimed that they are in agreement with all observed phenomena, but this is an over-statement. There are disagreements. Some of these are qualitative, not merely quantitative. For example, the strange particles, K, Λ, Σ, Ξ and Ω⁻, decay some hundreds of times faster than anticipated. This is not a new problem.27-29

The Standard Model experiences further difficulties when confronted with the old measurements by Rutherford and Hofstadter on the sizes of atomic nuclei. As discussed above, their measurements could be explained by Yukawa’s theory of pions. However, when Yukawa formulated his theory, he assumed the pion was a point particle, and this is not the case according to the model depicted in Figure 11. According to the figure, the pion has a similar size to that of the proton. This forces one to abandon Yukawa’s theory if the Standard Model is adopted. This is puzzling, as it is not only the old historic observations that support Yukawa’s theory. Data from the higher-energy successor to SLAC are directly supportive.30

Other possible cracks have appeared. A few particles have been found that do not fit the quark classification. Some appear to be four-quark states, others five-quark states.31-34 They are problematical. If one admits a few four-quark or five-quark states, one should admit all of them, and there are literally thousands of possibilities. At the time of writing, this is an on-going puzzle. The evidence for one of these states appears in Figure 13.

But the chief puzzles presented by the Standard Model, to the author’s eye, are the surprising nature of its founding assumptions and its complexity. The fractional charges assumed for quarks have already been remarked upon. We don’t see such charges in the laboratory, and are therefore asked to assume they are trapped permanently inside particles like the proton. This is possible, but there is no precedent for confinement in science (even black holes radiate). The related process of “hadronization”, as discussed above, is another surprising ingredient of the model. It appears to violate the basic principle of the conservation of charge.

Overall, the model appears neither simple nor elegant.35 To a sceptical (some might say jaundiced) eye, it appears surprisingly complicated, especially in comparison to Rutherford’s model of the atom. Instead of becoming simpler as successive onion-skins are peeled away, the Standard Model appears to become more complicated. Others have expressed comparable doubts. In his popular book on science,36 Bill Bryson quotes Leon Lederman as saying “there is a deep feeling the picture is not beautiful”.

Another puzzling problem is the one known as “renormalizability”. We consider this below.

The Higgs particle — symmetry breaker

As previously described, Aston showed how the binding energies of nuclei could be straightforwardly measured by subtracting their masses from the masses of their constituents.

In principle, the same could apply to quarks. If we weigh a proton, and also its constituents, the difference could be expected to yield its binding energy. We have noted that quarks are not observed to be dislodged from protons, even in high energy collisions. This suggests a high binding energy, which in turn suggests large masses for individual quarks.

However, model calculations based on the colour theory yield masses of the u and d quarks, of which the proton is assumed to be composed, that are low, just slightly larger than the mass of the electron.37 So the straightforward approach that Aston applied so successfully to nuclei seems not to apply to quarks.

The currently popular theory of the masses of quarks is due largely to UK theorist Peter Higgs.38 In this theory, an additional field is assumed to exist, the so-called Higgs field. This is the simplest possible field, a so-called “scalar” field. Such a field has the special capability of affecting the vacuum at all points in space in such a way that it can effectively impede the motions of particles through space. Particles that would otherwise travel at the speed of light, and hence be massless like the photon, are slowed to speeds less than the speed of light, and in so doing acquire mass. The slower a particle, the greater its mass. In this way, the Higgs field is assumed to generate the masses of particles.

The mathematics can be carried out, but the actual masses acquired by quarks and leptons cannot be predicted - they appear as free parameters in the theory. They must be determined empirically. The quark and lepton masses found this way span five orders of magnitude, from that of the electron to that of a gold atom.

The Higgs field, and its associated quantum, the Higgs particle, thus break the symmetry of the theory. Without the Higgs, all particles are massless and indistinguishable; with the Higgs, they acquire vastly different masses. As the US experimentalist Leon Lederman put it, “before the Higgs, symmetry and boredom; after the Higgs, complexity and excitement”.39 This may be likened to Picasso’s contribution to the world of art (Figure 14).

Picasso, of course, displayed multiple perspectives of a single scene simultaneously. The interpretation of the Higgs theory is not immediately obvious in physical terms, but probably it is not paralleled by Picasso’s use of multiple perspectives.

What can be said is that the Higgs theory impressively constrains the masses of the quanta that account for beta-type radioactivity, the W and Z particles shown in Figure 12, but at the cost of a parameter. The theory does not constrain the masses of the quarks or the leptons; these are left as free parameters that need to be determined empirically. It does, however, constrain the lifetimes of various particles, but here it runs into difficulty. As remarked above, the lifetimes of the strange particles (K, Λ, Σ, Ξ and Ω⁻) are predicted incorrectly.

The main implication of the theory is the existence of the quantum of the Higgs field, the Higgs particle. This has recently been the subject of intense experimental study by two groups, ATLAS and CMS, using the Large Hadron Collider at CERN, and they have reported intriguing results.

Both groups have reported evidence for a particle with approximately the expected properties, but the production rates are not quite as expected, and there are inconsistencies between the two sets of data. At the time of writing it appears that a “Higgs-like” particle has been found, but whether it is the particle predicted by the Standard Model, or something somewhat different, is an open question.40

The ATLAS and CMS groups have also searched for evidence of new phenomena predicted by theories in which the Standard Model is embedded in more extensive theories that aim to avoid the complexities of the Standard Model. To date, these searches have yielded blanks.41

This completes our very rapid and very incomplete tour of the main observations that led to the current Standard Model of physics. It is probably fair to say that the consensus opinion is that the model is neither simple nor elegant. Surprising assumptions are required, and inconsistencies with observations occur. Many parameters have to be determined empirically. Efforts to embed the theory in a more general theory that avoids the above issues have, as yet, not met with success.

Two alternative possibilities

In what follows, two alternatives to the Standard Model that share common features are briefly reviewed. One was proposed by Julian Schwinger,42 the other by the author.43 They were formulated simultaneously but independently. In view of the limited response they received in the literature, the present review is also brief. A more detailed review was given previously.44

Both theories were motivated by an earlier, well-known theory termed “Quantum Electrodynamics (QED)”. This was formulated largely by Schwinger, along with Richard Feynman, Freeman Dyson and Sin-Itiro Tomonaga, as described below.

Quantum electrodynamics, QED

QED is the quantum field theory of electrons, photons and positrons. It is a relativistic amalgamation of quantum mechanics and Maxwell’s equations, the latter being the 19th century equations of electricity and magnetism. Well-known texts on QED were authored by Schwinger45 and Feynman.46

The theory may be simply illustrated with the use of so-called “Feynman diagrams”, such as the one shown in Figure 15. This shows an interaction between two electrons, so-called Møller scattering. One of the electrons spontaneously emits a photon. In so doing, it recoils. The photon is subsequently absorbed by the other electron, which then recoils. In this way the electron-electron interaction is accounted for by the exchange of a photon, and the conceptual problem of “action at a distance” is avoided.

Feynman derived straightforward and remarkably successful rules for calculating the probability for any such process.45,46 For any process involving only electrons, photons and muons, agreement between theory and observation is achieved to nine significant figures when the data allow such a comparison. This is the best known agreement in science between theory and observation.

But there is a cost. To achieve this unique accuracy, a process of “renormalization” has first to be applied to the equations. This process, which Feynman referred to as a “shell game” whilst in New Zealand,13 may not be mathematically self-consistent. This viewpoint was shared by Dirac.47

Without the renormalization process, nearly all calculated quantities turn out to be infinite, which is clearly unphysical. In the renormalization process, the original equations of the theory are rewritten in terms of physically measurable quantities only. This yields finite and correct results, but the infinities appear to have been swept under the rug.

A proposal by the author

During the 1960s Kenneth Johnson, Marshall Baker and Raymond Willey attempted to formulate a finite version of QED in which infinite renormalizations would be unneeded. They avoided the conventional procedure of evaluating individual Feynman diagrams one-by-one. Instead, they summed infinite sequences of diagrams and showed, under various assumptions, that a finite theory might be constructible if an “eigenvalue” equation for the “bare” electron charge was satisfied, where the bare charge is the electric charge of the electron before the renormalization procedure is applied.

The result by Johnson, Baker and Willey was encouraging, but it seemed unlikely (to the author) that the observed electron charge could satisfy the equation, even approximately. In a natural system of units in which electric charge is dimensionless, the value of the observed charge is much less than unity, and it seemed that this would be an improbable solution to the eigenvalue equation.
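The size of the observed charge in such units can be illustrated with a short calculation. The sketch below assumes an approximate value of the fine-structure constant and shows two common natural-unit conventions (Heaviside-Lorentz and Gaussian); the essay does not say which convention is intended, and the function names are purely illustrative.

```python
import math

# Approximate fine-structure constant (assumed input, not taken from the essay).
ALPHA = 1 / 137.035999


def charge_heaviside_lorentz(alpha: float = ALPHA) -> float:
    """Dimensionless electron charge when alpha = e^2 / (4*pi), with hbar = c = 1."""
    return math.sqrt(4 * math.pi * alpha)


def charge_gaussian(alpha: float = ALPHA) -> float:
    """Dimensionless electron charge when alpha = e^2, with hbar = c = 1."""
    return math.sqrt(alpha)


if __name__ == "__main__":
    print(f"e (Heaviside-Lorentz) = {charge_heaviside_lorentz():.4f}")
    print(f"e (Gaussian)          = {charge_gaussian():.4f}")
```

In either convention the observed charge comes out well below unity (roughly 0.3 and 0.085 respectively), which is the sense in which it is "much less than unity".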

A more plausible solution appeared to be one of order unity, but this raised the prospect of the existence of a particle with an electric charge much larger than that of the electron. This was the situation confronted by the author in the 1960s, when the naïve free-quark model of Gell-Mann and Zweig was proposed and Johnson, Baker and Willey reported their finding.

The author’s suggestion at the time was to attempt a simultaneous solution43 to three problems, viz. the free-quark and the renormalization problems, and also a “gauge problem” that was not discussed above. The proposal was to utilize the large charges hinted at by the renormalization procedure to bind quarks together via Coulomb-like forces in a “gauge invariant” manner. This guaranteed that Coulomb’s Law would be correctly obeyed for particles such as protons and neutrons, a requirement that was troublesome for competing theories by Fermi and Chen Ning Yang49, Tsung-Dao Lee and Yang50, Jun John Sakurai51 and Schwinger52,53 at the time.

A model was constructed on the above foundation as follows.43 Quarks were assumed to be dually charged. One of the charges was large, the other conventional, but both were electric. The values of the charges were constrained by the above eigenvalue equation. The large charge was assumed to be responsible for binding quarks strongly together in a gauge invariant manner. Hadrons such as the proton and the neutron were assumed to be neutral composites with respect to this charge. Nuclear interactions between hadrons were assumed to be a residual effect of the quark binding interaction.

This was the first gauge invariant theory of strong interactions to be proposed; indeed, it was the first theory to be proposed with any of the above properties. Four years later, Gell-Mann and co-workers20 proposed the theory of coloured quarks, which included several of the above properties and which eventually became the bedrock of today’s “Standard Model” of matter.37

The similarities between the two theories are quite striking. Thus, Gell-Mann’s group assumed quarks were dually charged. One charge was large, the other fractional. The former charge was colour, the latter electric. The values of the charges were determined empirically. The large charge was assumed to be responsible for binding quarks so strongly that they were permanently confined within hadrons such as protons and neutrons. Hadrons were assumed to be neutral composites with respect to this charge in a gauge invariant manner. Nuclear interactions between hadrons were assumed to be a residual effect of the quark binding interaction.

Despite the above commonalities, the 1969 model differed from the coloured quark model in a number of ways. Fractional charges were not assumed, and confinement of quarks was not assumed either. Instead, very strong binding was assumed. Yukawa’s theory of nuclear forces was retained, and multiple generations of leptons and quarks were found to be required. A potential solution to Rabi’s old question thus seemed possible. In addition, the multiple generations of particles were found to be distinguishable. The need for imposed symmetry breaking between quarks and leptons thus seemed potentially avoidable.

The 1969 and 1973 theories differed further in the types of gauge invariance that were assumed. The 1969 theory by the author was based on the earliest known type of gauge invariance. This was the gauge invariance discovered by Hermann Weyl54 in 1928, which nowadays is known technically as “Abelian” gauge invariance. It arises as an inevitable consequence of the 19th century equations of electricity and magnetism (Maxwell’s equations) and the 20th century foundation of quantum mechanics (Schrödinger’s equation).
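As a sketch of what Abelian gauge invariance means in practice, the standard textbook form can be written as follows. The symbols $q$ (charge), $\theta(x)$ (an arbitrary function of position and time), $A_\mu$ (the electromagnetic potential) and the sign conventions are choices made here for illustration, in units with $\hbar = c = 1$; they are not taken from the 1969 paper.

```latex
\begin{align}
\psi(x) &\to e^{-iq\theta(x)}\,\psi(x), &
A_\mu(x) &\to A_\mu(x) + \partial_\mu\theta(x), \\
D_\mu &\equiv \partial_\mu + iqA_\mu, &
D_\mu\psi &\to e^{-iq\theta(x)}\,D_\mu\psi .
\end{align}
```

Two successive transformations with functions $\theta_1$ and $\theta_2$ give the same result in either order, because the phase factors are ordinary numbers that commute. This is the sense in which the invariance is termed Abelian.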

On the other hand, in the colour theory of 1973 a “non-Abelian” gauge invariance that had originally been proposed by Yang and Robert Mills in another context55 was assumed. This was equivalent to assuming that the forces between pairs of quarks are the same, irrespective of their colours.

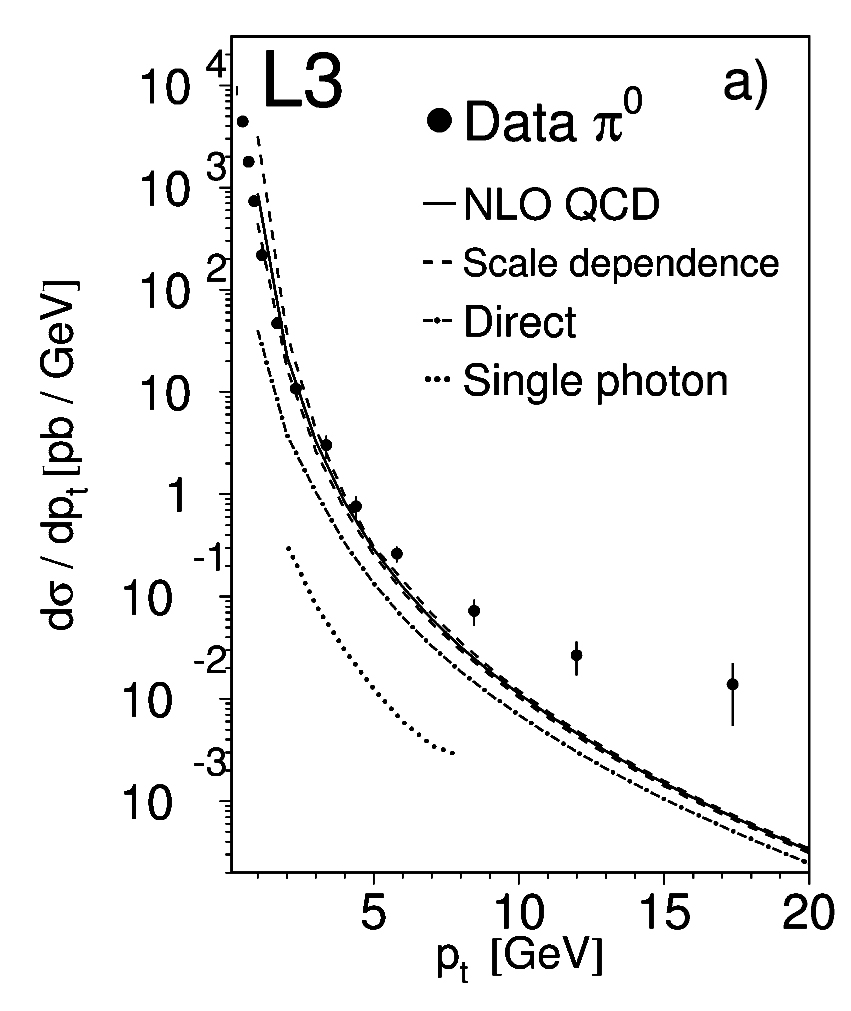

Intriguingly, possible evidence for large electromagnetic effects in electron-positron collisions was recently reported at CERN’s Large Electron-Positron collider.56-60 A typical example appears in Figure 16.

Clearly, the above data are either seriously incorrect, or the Standard Model fails to describe the electron-positron interaction at high energies. If a larger electron-positron collider were built, as has been proposed, the above data could be checked at higher energies and higher transverse momenta.44

Schwinger’s proposal

In 1969 Schwinger formulated an alternative model that shared several features with the one described above.42 Schwinger’s starting point was Dirac’s theory of the magnetic monopole.61 In 1931 Dirac symmetrized Maxwell’s equations of electricity and magnetism by assuming the existence of a magnetic monopole (i.e. a single north or south pole). He found that, when the principles of quantum mechanics were applied to the symmetrized equations, the value of the magnetic pole strength was constrained to a particular value, and that this value was large.
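To illustrate why the constrained pole strength is large, the short sketch below evaluates Dirac's quantization condition in its usual Gaussian-units form, e·g = n·ħc/2. The numerical value of the fine-structure constant and the function names are assumptions introduced here for illustration only.

```python
# Approximate fine-structure constant (assumed input, not taken from the essay).
ALPHA = 1 / 137.035999


def pole_strength_over_e(n: int = 1, alpha: float = ALPHA) -> float:
    """Ratio g/e implied by e*g = n*hbar*c/2 with alpha = e^2/(hbar*c)."""
    return n / (2 * alpha)


def magnetic_coupling(n: int = 1, alpha: float = ALPHA) -> float:
    """Dimensionless g^2/(hbar*c), the magnetic analogue of alpha."""
    return n * n / (4 * alpha)


if __name__ == "__main__":
    print(f"g/e          = {pole_strength_over_e():.2f}")
    print(f"g^2/(hbar c) = {magnetic_coupling():.2f}")
```

For the smallest allowed value n = 1, the pole strength is about 68.5 times the electron charge, and the magnetic analogue of the fine-structure constant is about 34 rather than 1/137.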

Schwinger then assumed, in parallel to the discussion given above, that quarks are dually charged objects which carry electric as well as magnetic charge. He assumed that the strong attraction between north and south poles was responsible for binding quarks together in hadrons, and that hadrons are magnetically neutral.

From a phenomenological point of view, Schwinger thus arrived at similar conclusions to those given above, including multiple generations of distinguishable particles, and a high-energy threshold above which large electromagnetic effects should become apparent.

We note, however, that the monopole theory is not fully gauge invariant and that it faces a problem with electric dipoles. Just as magnetic dipoles caused by circulating electric charges are familiar in normal circumstances, the circulation of monopoles would be expected to produce electric dipoles if monopoles exist. These would, moreover, be large if the magnetic pole strength is large. However, such electric dipoles are not seen, despite sensitive searches having been mounted.

Experimental tests of the alternative theories

As remarked above, electron-positron colliders present a possible means for testing the above models through their predictions of large electromagnetic effects at high energies. At the time of writing, planning is well advanced for a linear electron-positron collider that would operate at higher energies than those of CERN’s previous electron-positron collider.44 Unfortunately, however, funding has not yet been secured.

For completeness we mention an alternative possibility. This would be to convert the Large Hadron Collider to a very high energy electron-positron (or electron-electron) collider using the proton-driven plasma-wakefield acceleration technique.62 This might offer the most economical means for achieving high-energy collisions between electrons and positrons (and electrons and electrons), but we note that further technical advances are required before it could become a feasible possibility.

Conclusions

The current Standard Model of particle physics is not without its problems. Its founding assumptions are surprising and it does not enjoy quantitative success in all spheres. Some of the earliest observations dating back to the first half of the last century remain outside the model. Some calculational procedures used to test the model are questionable, in particular the simultaneous treatment of quarks as free yet confined particles. Efforts to embed the theory in a more aesthetically appealing framework have not, as yet, been fruitful.

Alternatives to the Standard Model are conceivable. Two have been presented in this essay. Both are testable, and others are surely possible.

In 1995 Gell-Mann authored an essay on the conformability of nature in which he considered physics being carried out by seven-tentacled beings on distant planets.63 He argued they would likely be following similar paths to those being followed here on Earth.

Interestingly, 1995 was an epochal year in astronomy. It marked the discovery of the first exoplanet orbiting a Sun-like star.64 Since then more than a thousand exoplanets have been found in an effort that has involved New Zealanders.7,65

The above discoveries, together with current plans for very large radio telescopes, have re-awakened prospects for making contact with advanced civilizations in the Galaxy.66 If these prospects can be brought to fruition, perhaps then we will be able to compare our efforts on Earth to understand the fundamental nature of matter with those of our distant cousins.

References

[1] E. Rutherford, “The scattering of α and β particles by matter and the structure of the atom”, Philosophical Magazine 21, 669-688 (1911).

[2] Wikipedia, “Rutherford model”, http://en.wikipedia.org/wiki/Rutherford_model

[3] F. W. Aston, “A positive ray spectrograph”, Philosophical Magazine 38, 707-714 (1919).

[4] H. Yukawa, “On the interaction of elementary particles”, Proceedings of the Physico-Mathematical Society of Japan 17, 48 (1935).

[5] C. Lattes, G. Occhialini, C. Powell, “Observations on the tracks of slow mesons in photographic emulsions”, Nature 160, 453-456 (1947).

[6] Wikipedia, “Victor Hess”, http://en.wikipedia.org/wiki/Victor_Francis_Hess

[7] P.C.M. Yock, “A quarter century of astrophysics with Japan”, NZ Science Review 69, 61-70 (2012).

[8] M. Ackermann et al., “Detection of the characteristic pion-decay signature in supernova remnants”, Science 339, 807-811 (2013).

[9] S. Neddermeyer, C. Anderson, “Note on the nature of cosmic ray particles”, Physical Review 51, 884-891 (1937).

[10] C. Anderson, “The positive electron”, Physical Review 43, 491-494 (1933).

[11] P.A.M. Dirac, “The quantum theory of the electron”, Proceedings of the Royal Society of London A 117, 610-624 (1928).

[12] G.D. Rochester, C.C. Butler, “Evidence for the existence of new unstable elementary particles”, Nature 160, 855-857 (1947).

[13] R.P. Feynman, QED — The strange theory of light and matter, Princeton University Press (1985).

[14] M. Gell-Mann, “A schematic model of baryons and mesons”, Physics Letters 8, 168-169 (1964).

[15] G. Zweig, “Memories of Murray and the quark model”, International Journal of Modern Physics A25, 3863-3877 (2010).

[16] G.D. Putt, P.C.M. Yock, “Search for fractional charge in tungsten”, Physical Review D 17, 1466-1467 (1978).

[17] P.C.M. Yock, “Heavy cosmic rays at sea level”, Physical Review D 34, 698-706 (1986).

[18] R. Hofstadter, “Nuclear and nucleon structure”, W.A. Benjamin Inc. (1963).

[19] Wikipedia, “SLAC”, http://en.wikipedia.org/wiki/SLAC_National_Accelerator_Laboratory

[20] H. Fritzsch, M. Gell-Mann, H. Leutwyler, “Advantages of the color octet gluon picture”, Physics Letters 47B, 365-368 (1973).

[21] Wikipedia, “Quark”, http://en.wikipedia.org/wiki/Quark

[22] D. Gross, F. Wilczek, “Watershed: the emergence of QCD”, CERN Courier, January/February (2013).

[23] R.P. Feynman, “Photon-Hadron interactions”, W. A. Benjamin, Reading MA, (1972).

[24] Wikipedia, “Standard Model”, http://en.wikipedia.org/wiki/Standard_Model

[25] A.D. Sakharov, “Violation of CP invariance, C asymmetry, and baryon asymmetry of the universe”, Journal of Experimental and Theoretical Physics 5, 24-27 (1967).

[26] M. Kobayashi, T. Maskawa, “CP violation in the renormalizable theory of weak interactions”, Progress of Theoretical Physics 49, 652-657 (1973).

[27] P.C.M. Yock, “Theory of highly charged subnuclear particles”, Annals of Physics (New York) 61, 315-328 (1970).

[28] H.Y. Cheng, “Status of the ∆I = ½ rule in kaon decays”, International Journal of Modern Physics A4, 495-582 (1989).

[29] P.A. Boyle, N.H. Christ, N. Garron et al., “Emerging understanding of the ∆I = ½ rule from lattice QCD”, Physical Review Letters 110, 152001 (2013).

[30] M. Derrick et al., “Observation of events with an inelastic forward neutron in deep inelastic scattering at HERA”, Physics Letters B 384, 388-400 (1996).

[31] D. Acosta et al., “Observation of a narrow state X(3872) in p̄p collisions at √s = 1.96 TeV”, Physical Review Letters 93, 072001 (2004).

[32] A. Bondar et al., (Belle Collaboration), “Observation of two charged bottomoniumlike resonances in Υ(5S) decays”, Physical Review Letters 108, 122001 (2012).

[33] Z.Q. Liu et al., (Belle Collaboration), “Study of e+e- → π+π-J/ψ and observation of a charged charmonium state at Belle”, Physical Review Letters 110, 252002 (2013).

[34] M. Ablikim et al., (BESIII Collaboration), “Observation of a charged charmonium structure in e+e- → π+π-J/ψ at √s = 4.26 GeV”, Physical Review Letters 110, 252001 (2013).

[35] S.L. Glashow, L.M. Lederman, “The SSC: a machine for the Nineties”, Scientific American, 3, 28-37 (1985).

[36] W.M. Bryson, A Short History of Nearly Everything, Doubleday (2005).

[37] H. Fritzsch, “The history of QCD”, CERN Courier, October (2012).

[38] P.W. Higgs, “Broken symmetries and the masses of gauge bosons”, Physical Review Letters 13, 508-509 (1964).

[39] L.M. Lederman, The God Particle, Dell Publishing, New York NY (1993).

[40] ATLAS Collaboration, “An update of combined measurements of the new Higgs-like Boson with high mass resolution channels”, ATLAS-CONF-2012-170 (2012).

[41] M. Chalmers, “Truant particles turn the screw on supersymmetry”, Nature 491, 505-506 (2012).

[42] J. Schwinger, “A magnetic model of matter”, Science 165, 757-761 (1969).

[43] P.C.M. Yock, “Unified field theory of quarks and electrons”, International Journal of Theoretical Physics 2, 247-254 (1969).

[44] P.C.M. Yock, “The gamma-gamma interaction: a critical test of the standard model”, International Journal of Modern Physics A 24, 3276-3285 (2009).

[45] J. Schwinger, Quantum Electrodynamics, Dover Publications (1958).

[46] R.P. Feynman, Quantum Electrodynamics, W.A. Benjamin (1962).

[47] P.A.M. Dirac, “The evolution of the physicist’s picture of nature”, Scientific American 208, May (1963).

[48] K. Johnson, M. Baker, R. Willey, “Self-energy of the electron”, Physical Review 136, B1111-B1120 (1964).

[49] E. Fermi, C.N. Yang, “Are mesons elementary particles?”, Physical Review 76, 1739-1743 (1949).

[50] C.N. Yang, T.D. Lee, “Conservation of heavy particles and generalized gauge transformations”, Physical Review 98, 1501 (1955).

[51] J.J. Sakurai, “Theory of strong interactions”, Annals of Physics (NY) 11, 1-48 (1960).

[52] J. Schwinger, “Gauge invariance and mass”, Physical Review 125, 397-398 (1962).

[53] J. Schwinger, “Field theory of matter”, Physical Review 135, B816-B830 (1964).

[54] H. Weyl, Gruppentheorie und Quantenmechanik, Leipzig, Hirzel (1928).

[55] C.N. Yang, R.L. Mills, “Conservation of isotopic spin and isotopic gauge invariance”, Physical Review 96, 191-195 (1954).

[56] P. Achard et al., (L3 Collaboration), “Inclusive neutral pion and kaon production in two-photon collisions at LEP”, Physics Letters B 524, 44-54 (2002).

[57] P. Achard et al., (L3 Collaboration), “Inclusive charged hadron production in two-photon collisions at LEP”, Physics Letters B 554, 105-114 (2003).

[58] P. Achard et al., (L3 Collaboration), “Inclusive jet production in two-photon collisions at LEP”, Physics Letters B 602, 157-166 (2004).

[59] G. Abbiendi et al., (OPAL Collaboration), “Inclusive production of charged hadrons in photon-photon collisions”, Physics Letters B 651, 92-101 (2007).

[60] G. Abbiendi et al., (OPAL Collaboration), “Inclusive jet production in photon-photon collisions from 189 to 209 GeV”, Physics Letters B 658, 185-192 (2008).

[61] P.A.M. Dirac, “Quantised singularities in the electromagnetic field”, Proceedings of the Royal Society (London) A 133, 60-73 (1931).

[62] P.C.M. Yock, “A plasma-wakefield collider at the LHC?”, CERN Courier, June (2009).

[63] M. Gell-Mann, “Nature conformable to herself”, in Selected Papers, ed. H. Fritzsch, World Scientific (2009).

[64] M. Mayor, D. Queloz, “A Jupiter-mass companion to a solar-type star”, Nature 378, 355–359 (1995).

[65] F. Abe et al., “Extending the planetary mass function to earth mass by gravitational microlensing at moderate magnification”, Monthly Notices of the Royal Astronomical Society 431, 2975-2985 (2013).

[66] A.P.V. Siemion et al., “A 1.1 to 1.9 GHz SETI survey of the Kepler field: I. A search for narrow-band emission from select targets”, Astrophysical Journal 767, 94 (2013).